Introduction

Why Rubin-era densities lock in direct-to-chip cooling

Rubin-era planning isn’t about guessing one exact rack kW number. It’s about acknowledging a trajectory: rack-scale AI systems have already moved into liquid-cooled form factors, and each generation tightens the thermal and infrastructure envelope.

NVIDIA’s rack-scale, liquid-cooled GB200 NVL72 design is a concrete example of where the market is headed: dense compute packaged as a facility-integrated system, where “IT cooling” and “building cooling” are no longer separable decisions. For procurement teams, that’s exactly why a GPU liquid cooling playbook is useful: it forces you to specify the interfaces before the hardware arrives.

What decisions you must finalize before procurement

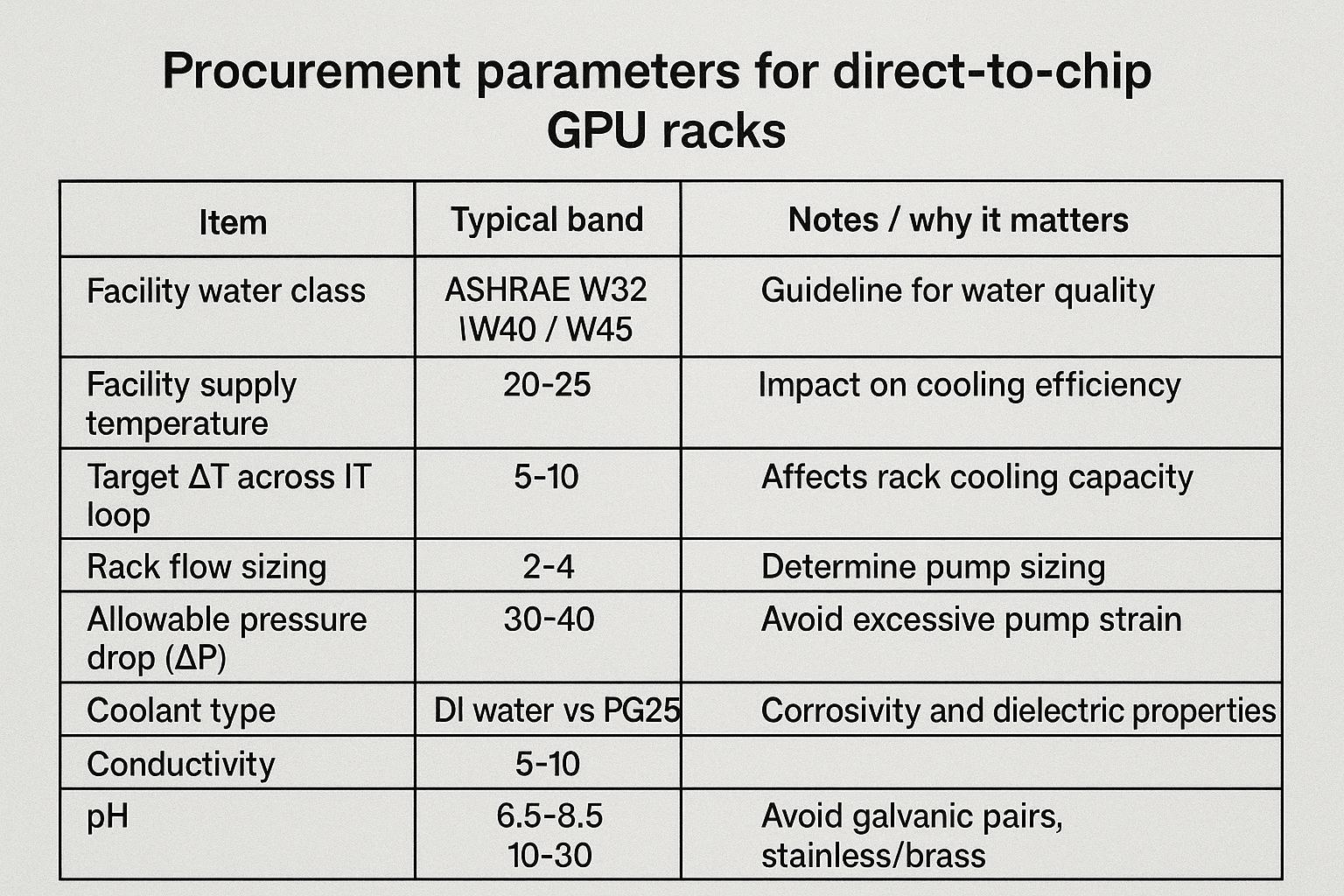

Before you issue an RFP (or accept a vendor’s reference design), lock down the facility↔IT handshake parameters. These are the items that cause redesigns late in the project:

- Facility water temperature class (e.g., ASHRAE W32/W40/W45) and allowable seasonal setpoints
- Flow and pressure envelope at the CDU (and at the worst-case rack branch)
- Water quality / filtration / materials compatibility requirements
- CDU topology and redundancy pattern (N, N+1, 2N) for pumps, heat exchangers, and controls
- Rack manifold topology, quick-connect specification, and service/maintenance procedures
- Leak detection, spill containment zones, and “safe state” logic for alarms

How this playbook turns guidance into RFP-ready specs

This playbook is organized as a decision framework. You can:

- Choose the cooling method that matches your density and deployment model.
- Translate that choice into facility water, CDU, and rack interface specs.
- Validate the design with commissioning and acceptance tests that reduce SLA risk.

Key Takeaway: Treat liquid cooling as a multi-level system (chip → rack → CDU → facility heat rejection). If one level is underspecified, that gap will surface as schedule risk during integration.

Platform trajectory and densities

From Blackwell to Rubin: rack power trends

Public signals already show rack power density rising fast in AI training and inference clusters. Industry observers note that rack-scale AI systems have reached 100 kW-class footprints, with discussion moving toward even higher densities.

Two practical implications for procurement teams:

- Air becomes a deployment constraint at higher rack kW because the airflow, fan power, and room-level heat removal requirements escalate sharply.
- Liquid becomes the “primary thermal transport” while air shifts to residual heat, networking, and room hygiene.

For a grounding reference point, NVIDIA positions GB200 NVL72 as a rack-scale, liquid-cooled system (see the GB200 NVL72 product page referenced above). Analysts covering GTC-era architectures also emphasize that rack density is “skyrocketing” (Dell’Oro commentary on GPU compute architecture).

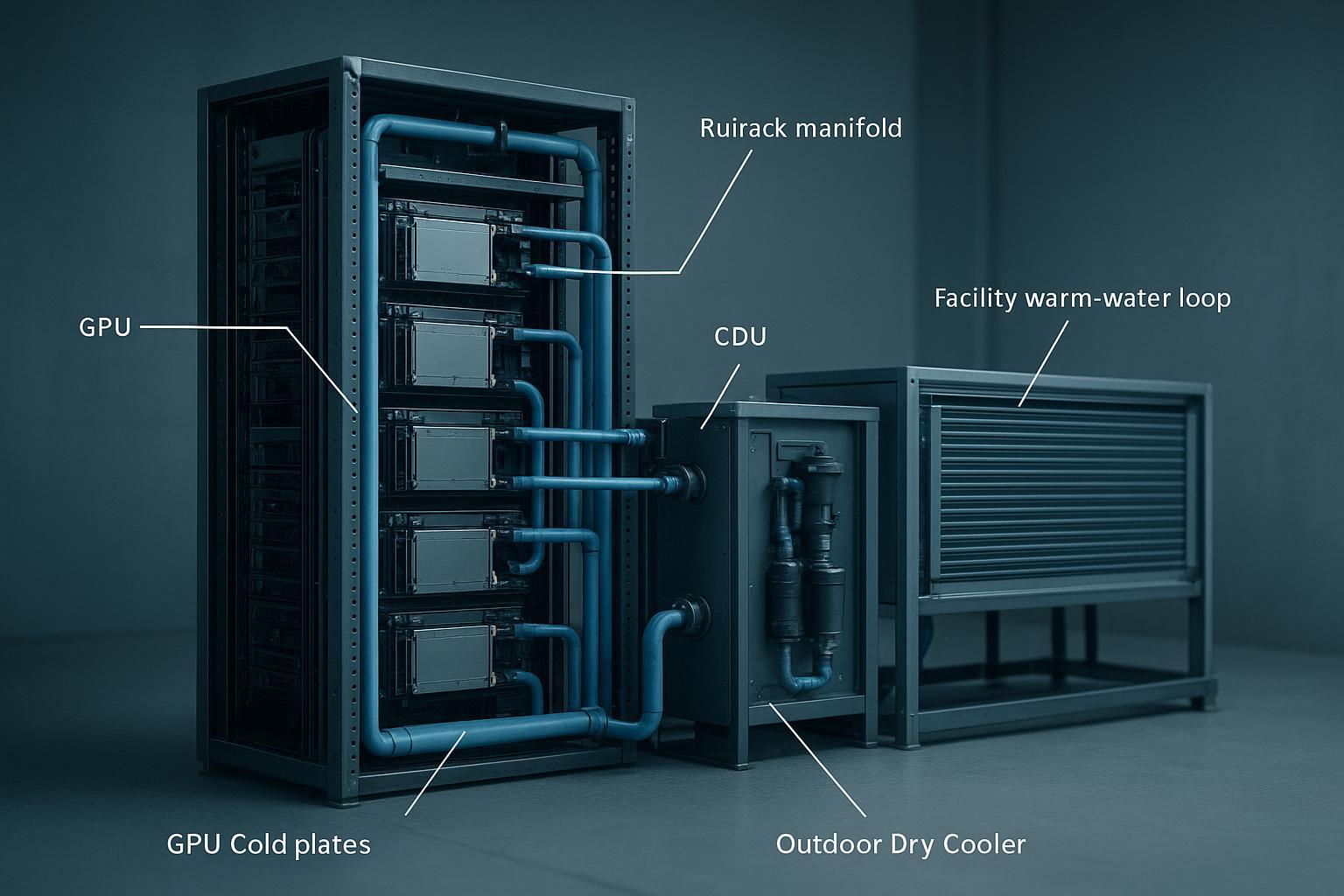

Heat flux implications for cold plates and manifolds

As chip and module heat flux rises, cold plates stop being commodity parts. What matters is the whole hydraulic chain:

- Cold plate microchannel performance and allowable pressure drop
- Distribution uniformity across parallel branches (preventing the “hot server at the end of the header” problem)
- Manifold and quick-connect geometry that avoids throttling flow
- Control logic that responds to transient load swings without hunting (oscillation)

Planning bands for per-accelerator/server/rack

Use planning bands in your RFP to keep options open without committing to a single SKU:

- Per accelerator: specify allowable inlet temperature range, target ΔT band, and min flow per device class.
- Per server: specify maximum allowable pressure drop from rack header to server return.
- Per rack: specify full-load heat capture percentage by liquid loop (e.g., “liquid loop removes the dominant fraction of GPU/CPU heat”) and how residual heat is handled by air.

Avoid writing a spec that assumes one vendor’s exact flow number. Instead, specify the envelope and require proof at acceptance testing.

Cooling method decision matrix

Key Takeaway: In most Rubin-era planning scenarios, direct-to-chip liquid cooling is the baseline. The real decision is whether you add rear-door assist for mixed rooms, or consider immersion for highly standardized operations.

Direct-to-chip vs rear-door assist vs immersion

Use this matrix as a starting point for evaluation.

- Direct-to-chip (DTC): Cold plates on GPUs/CPUs; a CDU controls the IT loop and exchanges heat to facility water.
  - Best fit when you need predictable operations, serviceability, and compatibility with mainstream server ecosystems.
  - This is the dominant architecture for high-density GPU rows, because it removes heat at the source with fewer room-level side effects (see the DataCenterDynamics discussion linked earlier).
- Rear-door heat exchanger (RDHx) assist: Captures rack exhaust heat at the door; often paired with partial DTC.
  - Best fit when you’re upgrading a mixed room and want to reduce room heat load without immediately converting every server loop.
- Immersion: Servers submerged in dielectric fluid.
  - Best fit when the organization is ready for a different maintenance paradigm and can standardize on immersion-ready hardware and procedures.

A practical framing is that direct-to-chip is becoming the default for AI racks because it scales performance with fewer room-level side effects (DataCenterDynamics on direct-to-chip’s rise).

When air still matters in mixed environments

Even in liquid-first racks, air doesn’t disappear. Plan explicitly for:

- Residual heat from memory, NICs, storage, and power conversion
- Network and cable management thermal hygiene
- Room ventilation and dew point control (condensation risk management)

If your deployment includes both legacy and liquid-ready racks, treat “air path integrity” as a first-class requirement for the whole room.

Key tradeoffs for GPU liquid cooling at edge sites

Edge and modular sites (<100 kW) create different constraints than hyperscale halls:

- Fewer onsite specialists → you need safer service modes, clearer alarms, and faster isolation
- Tighter utility envelopes → warm-water economization and dry coolers become more attractive
- Faster deployment cycles → prefabricated skids and standardized interfaces reduce schedule risk

Facility water, flow, and pressure

ASHRAE W-class envelopes (W32/W40/W45) and supply/return setpoints

ASHRAE’s liquid cooling classes are commonly used to describe the facility water supply temperature range available to a CDU heat exchanger. Recent guidance emphasizes the “W-class” naming (e.g., W32/W40/W45) and the importance of clear compliance definitions (Upsite summary of ASHRAE 5th edition liquid cooling updates).

For procurement, the critical move is to specify:

- The facility water supply temperature band you can provide (seasonal min/typical/max)
- The maximum facility return temperature you can accept (and whether it affects heat rejection capacity)
- The approach temperature you expect across the CDU heat exchanger at rated load

Warm-water operation is often pursued to maximize economization hours and reduce or eliminate chiller dependency; ASHRAE-aligned industry discussion describes a durable roadmap for ~30°C-class coolant strategies (“30°C Coolant — A Durable Roadmap for the Future” PDF).

In practice, a warm-water cooling loop shifts your engineering effort from “how cold can I get the supply” to “how stable can I keep the hydraulic envelope” (flow, ΔP, approach temperature, and alarm logic) across seasons.
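To make the approach-temperature item concrete, here is a minimal sketch of the margin check it implies. It assumes "approach" means IT-loop supply temperature minus facility water supply temperature at rated load; vendors may define it differently, so confirm the definition in the RFP. The function names and the example numbers (32 °C facility supply, 4 K approach, 40 °C device inlet limit) are illustrative placeholders, not values from ASHRAE or any vendor.

```python
def it_loop_supply_c(facility_supply_c, approach_k):
    """IT-loop supply temperature, assuming approach = IT supply - facility supply."""
    return facility_supply_c + approach_k


def inlet_margin_k(facility_supply_c, approach_k, max_device_inlet_c):
    """Remaining margin (K) between IT-loop supply and the device inlet limit.

    A negative result means the facility band plus the CDU approach
    exceeds what the cold plates are allowed to receive.
    """
    return max_device_inlet_c - it_loop_supply_c(facility_supply_c, approach_k)


if __name__ == "__main__":
    # Placeholder values for illustration only.
    for facility_c in (27.0, 32.0, 40.0):
        margin = inlet_margin_k(facility_c, approach_k=4.0, max_device_inlet_c=40.0)
        status = "OK" if margin >= 0 else "EXCEEDS LIMIT"
        print(f"facility {facility_c:.0f} C -> margin {margin:+.1f} K ({status})")
```

Running the check against the seasonal maximum, not the typical value, shows whether the warm-water band still leaves device-level margin on the hottest days.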

Flow-rate bands and ΔT sizing for GPU liquid cooling

Instead of treating flow as a fixed “magic number,” tie it to heat load and allowable temperature rise:

- Higher ΔT reduces required flow (and pumping power), but it tightens device-level thermal margins.
- Lower ΔT increases flow and can increase pump energy and pressure-drop risk.

For RFP purposes, define:

- Target ΔT band across the IT liquid loop under steady full load
- Minimum flow requirement at the worst-case branch (farthest rack/server)
- Maximum allowable ΔP across rack distribution and server connections
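The ΔT-versus-flow trade-off follows from the sensible-heat relation Q = ṁ · c_p · ΔT. The sketch below turns a heat load and a target ΔT into a volumetric flow. It assumes plain water properties (c_p ≈ 4.18 kJ/kg·K, density ≈ 0.997 kg/L); glycol mixtures have a lower specific heat and need more flow, so both properties are parameters. The function name and defaults are illustrative, not taken from any vendor sizing tool.

```python
def required_flow_lpm(heat_kw, delta_t_k, cp_kj_per_kg_k=4.18, density_kg_per_l=0.997):
    """Volumetric flow (L/min) needed to carry heat_kw at a given loop temperature rise.

    Defaults approximate plain water near room temperature (assumption);
    substitute the properties of the actual coolant.
    """
    if heat_kw < 0 or delta_t_k <= 0:
        raise ValueError("heat_kw must be >= 0 and delta_t_k must be > 0")
    mass_flow_kg_s = heat_kw / (cp_kj_per_kg_k * delta_t_k)  # Q = m_dot * cp * dT
    return mass_flow_kg_s / density_kg_per_l * 60.0


if __name__ == "__main__":
    # Sweep a few candidate dT bands for a hypothetical 100 kW liquid-captured load.
    for dt in (5, 10, 15):
        print(f"100 kW at dT={dt} K -> {required_flow_lpm(100, dt):.0f} L/min")
```

With these water assumptions, a 100 kW load at a 10 K rise needs roughly 144 L/min, and halving ΔT to 5 K doubles the flow. That is why the RFP should state the ΔT band and the coolant rather than a single flow number.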

Water quality, filtration, and material compatibility

Water quality is a reliability requirement, not a “nice-to-have.” In DTC systems, fouling or corrosion can quickly show up as increased ΔP or reduced heat transfer.

Define procurement-grade requirements for:

- Filtration (with a documented maintenance plan)
- Chemistry monitoring (conductivity/pH) and lab sampling cadence
- Material compatibility rules to reduce corrosion and galvanic risk

CDUs and redundancy patterns

In-rack vs in-row vs room CDU selection

Think of CDU placement as a trade between standardization, serviceability, and hydraulic complexity:

- In-rack CDU: tight integration; can simplify modular deployments but may reduce service space and complicate rack swaps.
- In-row CDU: shared capacity across multiple racks; often a good compromise for small rows and edge modules.
- Room CDU: centralized plant-like approach; operationally clean but can add piping complexity for small footprints.

If you need a refresher on CDU functions (pumping, filtration, heat exchange, controls), see the explainer on CDU for liquid cooling optimization.

N, N+1, and 2N for pumps, HX, and power feeds

Procurement needs to force clarity on redundancy.

- N: lowest cost, highest operational risk; generally only acceptable for non-critical or test pods.
- N+1: common default (pump redundancy, control redundancy, and partial capacity margin).
- 2N: used where the workload and SLA justify full mirrored paths.

Specify whether redundancy is required at:

- Pump sets (including independent power feeds)
- Heat exchanger modules (ability to maintain capacity with one HX out)
- Controls and sensors (single controller shouldn’t be a hard stop)
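The "maintain capacity with one unit out" requirement reduces to simple capacity arithmetic, sketched below. The function names and the example figures (three 60 kW modules against a 100 kW design load) are hypothetical. The check covers capacity only; it says nothing about whether power feeds, piping, or controllers are truly independent, which still has to be shown on the P&ID.

```python
import math


def units_required(design_load_kw, unit_capacity_kw):
    """N: the minimum number of units needed to carry the design load."""
    return math.ceil(design_load_kw / unit_capacity_kw)


def spare_units(design_load_kw, unit_capacity_kw, units_installed):
    """Units installed beyond N (0 means N, 1 means N+1, negative means undersized)."""
    return units_installed - units_required(design_load_kw, unit_capacity_kw)


def holds_load_with_units_out(design_load_kw, unit_capacity_kw, units_installed, units_out=1):
    """True if the remaining units can still carry the full design load."""
    remaining = max(units_installed - units_out, 0)
    return remaining * unit_capacity_kw >= design_load_kw


if __name__ == "__main__":
    # Hypothetical example: three 60 kW HX modules serving a 100 kW pod.
    print(units_required(100, 60))                # N
    print(spare_units(100, 60, 3))                # spare units beyond N
    print(holds_load_with_units_out(100, 60, 3))  # one module out of service
```

Asking vendors to fill in the same three numbers for pumps, heat exchangers, and controllers makes redundancy claims comparable across bids.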

Controls, isolation, and service bypass design

Require a service mode that allows maintenance without draining a whole pod:

- Isolation valves that segment racks/rows
- Bypass paths that prevent dead-heading pumps
- Alarms that drive a predictable “safe state” (throttle, isolate, or shutdown)

For commissioning discipline, sources emphasize that liquid cooling requires a strict facility–IT handshake and observability (for example, XD Thermal’s guide to direct-to-chip operations).

Manifolds, quick-connects, and materials

Header topology and valve/isolation strategy

Your manifold/header design determines whether flow distribution is stable under real operations.

Procurement-spec items:

- Supply/return header sizing and allowable velocity
- Balancing approach (orifice plates, balancing valves, or controlled branches)
- Isolation strategy: rack-level isolation plus service isolation at QD clusters

Dry-break couplings, ratings, and cycle life

Quick-connects are a high-risk interface because they’re touched during service.

Use engineering selection criteria that favor reliability:

- Non-spill / dry-break behavior (to minimize drip during disconnect)
- Low pressure drop at nominal flow
- Mechanical durability and repeatable seal performance
- Materials compatibility with coolant chemistry

Quick coupling manufacturers summarize these as core requirements—optimal flow, non-spill disconnect, compactness, durability, and material compatibility (CEJN: 5 key requirements for quick couplings). ASHRAE TC 9.9 also discusses dry-break quick disconnects as a common component in water-cooled server designs (ASHRAE TC 9.9 water-cooled servers whitepaper PDF).

Leak detection and spill containment zones

Leak response is an operational system, not a sensor SKU.

Define zones and responses:

- Server zone: detect early, isolate the server/branch
- Rack manifold zone: detect and isolate the rack; route leaks away from electronics
- CDU zone: detect, alarm, and isolate while preserving as much service as safe
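One way to keep the zone definitions enforceable is to write them down as a small response matrix and check it for gaps. The sketch below is illustrative only: the zone names mirror the list above, while the action strings and helper names are hypothetical placeholders to be replaced with the actions your controls vendor actually supports.

```python
from enum import Enum


class Zone(Enum):
    SERVER = "server"
    RACK_MANIFOLD = "rack_manifold"
    CDU = "cdu"


# Hypothetical response matrix: each zone maps to an ordered tuple of actions.
LEAK_RESPONSE = {
    Zone.SERVER: ("alarm", "isolate_server_branch"),
    Zone.RACK_MANIFOLD: ("alarm", "isolate_rack"),
    Zone.CDU: ("alarm", "isolate_cdu_segment"),
}


def leak_actions(zone, matrix=LEAK_RESPONSE):
    """Return the ordered actions for a leak detected in the given zone."""
    return matrix[zone]


def missing_zones(matrix):
    """List zones that have no defined response, in declaration order."""
    return [zone for zone in Zone if zone not in matrix]


if __name__ == "__main__":
    print(leak_actions(Zone.RACK_MANIFOLD))
    print(missing_zones(LEAK_RESPONSE))  # an empty list means every zone is covered
```

The completeness check is the useful part: an alarm matrix that leaves a zone undefined is a gap an acceptance review should catch before handover.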

For internal practice, link these zone definitions to your own safety-controls write-up, for example Liquid cooling safety risks and proven controls.

Heat rejection and energy strategy

Dry coolers, adiabatic assist, and economizers

In warm-water designs, dry coolers and economizers become primary heat rejection paths. Adiabatic assist can extend capacity during hotter hours at the cost of water usage and added maintenance.

Procurement questions to force early:

- What is the design ambient for full load (and is adiabatic allowed)?
- What is the acceptable approach temperature in summer vs shoulder seasons?
- What happens during smoke/dust events or water restrictions?

Chiller-less operation with warm-water loops

The practical objective is often “chiller-less most of the year,” not “chiller-less always.” Warm-water loops can reduce compressor hours and simplify the plant.

The best RFP language is to specify the operating modes:

- Economizer mode (dry cooler only)
- Hybrid mode (dry cooler + adiabatic)
- Contingency mode (mechanical assist if present)

Seasonal setpoints and capacity margin planning

Setpoints are not static. Require a seasonal plan:

- Supply temperature targets by month (or by ambient band)
- Capacity margin at worst-case ambient
- Transient response: how the loop behaves when training load steps up
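A seasonal plan keyed to ambient bands can be expressed as a small lookup table, which also makes it easy to review in an RFP appendix. The sketch below is a minimal example; the band edges and setpoints are placeholders chosen for illustration, not recommended values, and the names are hypothetical.

```python
from bisect import bisect_right

# Placeholder ambient band edges (deg C) and one supply setpoint per band.
# Band 0: ambient < 15, band 1: 15 <= ambient < 30, band 2: ambient >= 30.
AMBIENT_BREAKS_C = [15.0, 30.0]
SUPPLY_SETPOINTS_C = [27.0, 32.0, 40.0]


def supply_setpoint_c(ambient_c):
    """Return the facility supply setpoint for the band containing ambient_c."""
    band = bisect_right(AMBIENT_BREAKS_C, ambient_c)
    return SUPPLY_SETPOINTS_C[band]


if __name__ == "__main__":
    for ambient in (5.0, 15.0, 29.9, 30.0, 38.0):
        print(f"ambient {ambient:4.1f} C -> supply setpoint {supply_setpoint_c(ambient):.0f} C")
```

A real controller would add hysteresis around the band edges so the setpoint does not toggle when ambient hovers near a boundary.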

Controls, monitoring, and commissioning

Telemetry: temperature, flow, ΔP, and chemistry

At minimum, specify telemetry at three layers:

- CDU: supply/return temperatures, pump status, flow, ΔP across HX, alarms
- Rack: header temps, rack flow, rack ΔP, leak detection state
- Chemistry: conductivity and pH trend, plus sampling points

Alarms, SOPs, and failover testing

Alarms should drive SOPs that a distributed operations team can execute.

Require:

- Alarm thresholds with hysteresis (avoid flapping)
- Clear “safe state” behavior per alarm class
- Periodic failover tests for redundant pumps and controls
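Hysteresis means an alarm trips at one threshold and clears only at a lower one, so a reading that hovers near the limit does not toggle the alarm. A minimal sketch follows, assuming a high-temperature alarm; the class name and the 45 °C / 42 °C thresholds are hypothetical.

```python
class HysteresisAlarm:
    """High-side alarm that trips at `trip` and clears only at or below `clear`."""

    def __init__(self, trip, clear):
        if clear >= trip:
            raise ValueError("clear threshold must be below trip threshold")
        self.trip = trip
        self.clear = clear
        self.active = False

    def update(self, value):
        """Feed one reading and return the alarm state after it."""
        if not self.active and value >= self.trip:
            self.active = True
        elif self.active and value <= self.clear:
            self.active = False
        return self.active


if __name__ == "__main__":
    alarm = HysteresisAlarm(trip=45.0, clear=42.0)  # placeholder thresholds
    readings = [44.0, 45.2, 44.5, 43.0, 41.9, 44.0]
    print([alarm.update(r) for r in readings])
```

In this sequence the alarm stays active while the reading sits between 42 °C and 45 °C after tripping, and a single-threshold alarm would have cleared and re-armed on the same data. The RFP can ask for both thresholds, plus any time delay, per alarm class.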

Acceptance tests and documentation for handover

Acceptance testing is where specs become real.

Include:

- Flow verification at worst-case branch
- Full-load thermal step tests (power step and recovery)
- Leak detection functional tests
- Sensor calibration evidence

Commissioning guidance often recommends using liquid-cooled load banks to emulate rack loads and capture thermal/hydraulic behavior before production IT is fully populated (Avtron: liquid-cooled load banks for data center readiness).
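Flow verification is easier to audit when the pass/fail rule is written down explicitly. The sketch below compares measured branch flows against a minimum requirement and identifies the worst-case branch; the rack names, readings, and the 55 L/min minimum are invented for illustration.

```python
def failing_branches(measured_lpm, min_required_lpm):
    """Return {branch: flow} for every branch below the minimum required flow."""
    return {name: flow for name, flow in measured_lpm.items() if flow < min_required_lpm}


def worst_case_branch(measured_lpm):
    """Return the name of the branch with the lowest measured flow."""
    return min(measured_lpm, key=measured_lpm.get)


if __name__ == "__main__":
    # Hypothetical readings taken during a load-bank test (L/min).
    readings = {"rack01": 62.0, "rack02": 58.5, "rack08": 49.0}
    print("worst-case branch:", worst_case_branch(readings))
    print("below minimum:", failing_branches(readings, min_required_lpm=55.0))
```

Recording the worst-case branch by name in the handover package gives operations a known reference point for later trend checks.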

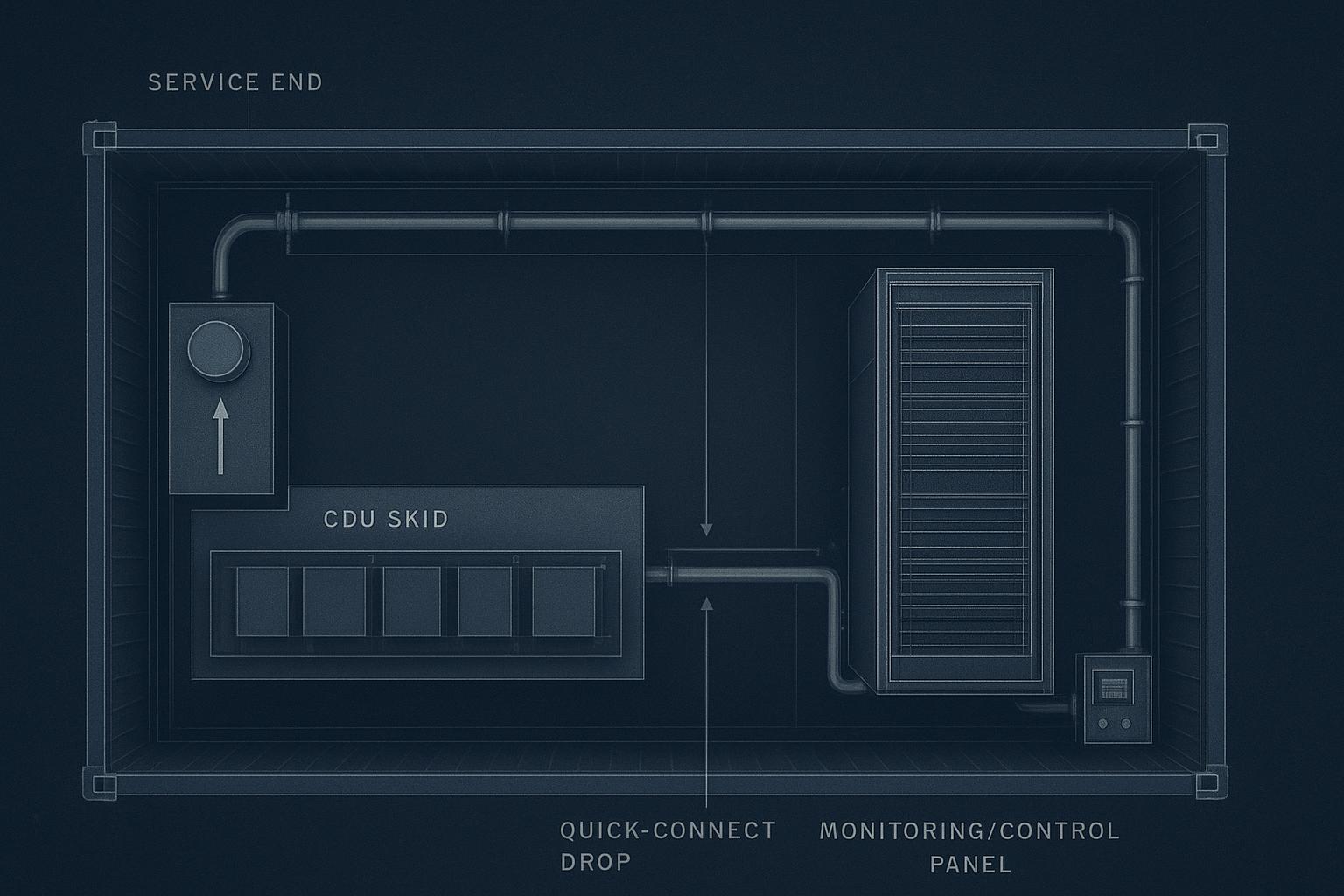

Modular and containerized integration (<100 kW)

Prefabricated skids, headers, and quick deployment

For sub-100 kW edge sites, standardization matters more than customization.

A practical pattern is to deploy a prefabricated “liquid utility spine” that includes:

- A CDU skid (pumps + HX + filtration)
- Dual headers (supply/return) with isolation and drains
- Monitoring points and a simple control panel

This reduces onsite piping work and makes the facility↔IT handshake repeatable across many small deployments.

Structural allowances and service clearances

Container footprints are unforgiving. Reserve space for:

- Front-access maintenance at the CDU skid
- Hose bend radius and quick-connect service clearance
- Spill containment and drainage routing

Example: an integrated CDU–manifold–monitoring skid

As an educational example of the prefab pattern, Coolnet offers integrated skids that combine a CDU function (pumping + heat exchange), manifold/header distribution, and monitoring points in a single packaged unit. The important takeaway for buyers isn’t the brand—it’s the interface discipline:

- One defined facility-water connection set (supply/return, design flow, design ΔP)
- One defined rack distribution interface (header sizes, isolation, quick-connect drop strategy)
- One defined monitoring set (temperature/flow/pressure/chemistry points) that can be mapped into your DCIM/BMS

If you choose a packaged skid approach, require the vendor to provide:

- P&ID and point list
- Alarm matrix with safe-state behavior
- Bypass/isolation drawings
- Commissioning procedure and acceptance criteria

Conclusion

What to standardize now for Rubin-era readiness

Standardize the handshake:

- W-class / supply-return envelope you will support
- A consistent CDU redundancy and control philosophy
- A consistent rack manifold + QD specification and service mode
- A consistent commissioning and acceptance test package

How to phase upgrades and de-risk deployment

If you’re migrating from mixed environments:

- Start with a liquid-ready row or modular pod where you can validate real operations.
- Use acceptance tests to lock down flow/ΔT/ΔP behavior before scaling.
- Expand with repeatable skids, headers, and documentation packages.

A good place to start is standardizing your own internal “liquid cooling deployment playbook” and linking it to existing building blocks such as Coolnet’s liquid cooling solutions and CDU interface concepts discussed in Coolant Distribution Units (CDU) for data center cooling.

Where to validate numbers with vendors and standards

Validate every “typical band” against:

- Your server vendor’s liquid cooling requirements
- ASHRAE liquid cooling guidance and interpretation
- Your CDU vendor’s performance curves (including approach temperature and pump maps)

As a follow-on step, compile an RFP appendix that condenses this playbook into a one-page “facility interface requirements” checklist.
